By Mauro Pereira

Data governance in banking has matured for compliance, but not for value. In this IvyInsights piece, Mauro Pereira breaks down what a decade of regulatory-driven governance has taught us, and why the path forward requires embedding governance into decision-making, not building it as a parallel control structure.

The financial services industry has long been considered a reference point for data governance. Heavy regulation, complex operating models, and a strong risk culture have forced banks to invest early and deeply in data management, data quality, and governance structures.

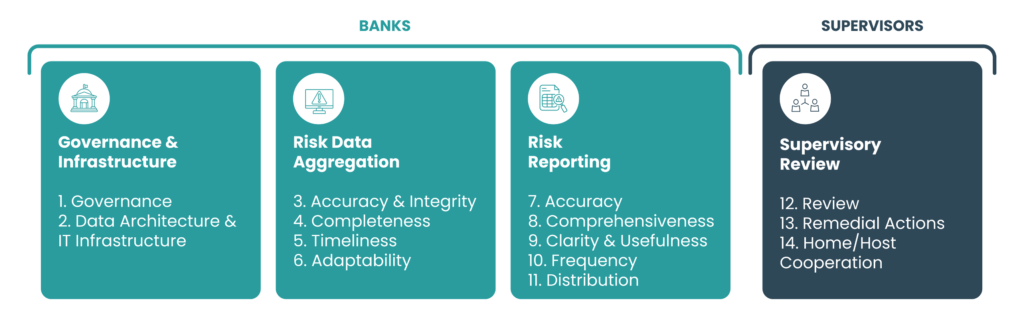

Frameworks such as BCBS 239, focused on risk data aggregation and risk reporting, have fundamentally reshaped how large financial institutions organise their data, define ownership, and control quality.

In the aftermath of the 2007–2008 global financial crisis, the Basel Committee on Banking Supervision identified that many banks were unable to aggregate risk exposures accurately, completely, and in a timely manner, particularly under stressed conditions. These deficiencies were not limited to technology immaturity: they reflected fragmented data architectures, inconsistent data definitions across business lines, weak governance structures, and insufficient board-level oversight. BCBS 239 therefore formalised a clear expectation: effective risk data aggregation must be underpinned by strong governance, clear data ownership, robust IT infrastructure, and embedded data quality controls.

The publication of BCBS 239 marked a turning point by formalising supervisory expectations around governance, risk data aggregation, and reporting capabilities. Although regulatory in origin, its impact extended well beyond compliance. It accelerated a broader institutional realisation: data could no longer be managed in silos or treated as a secondary by-product of operations; it required structured, enterprise-wide discipline.

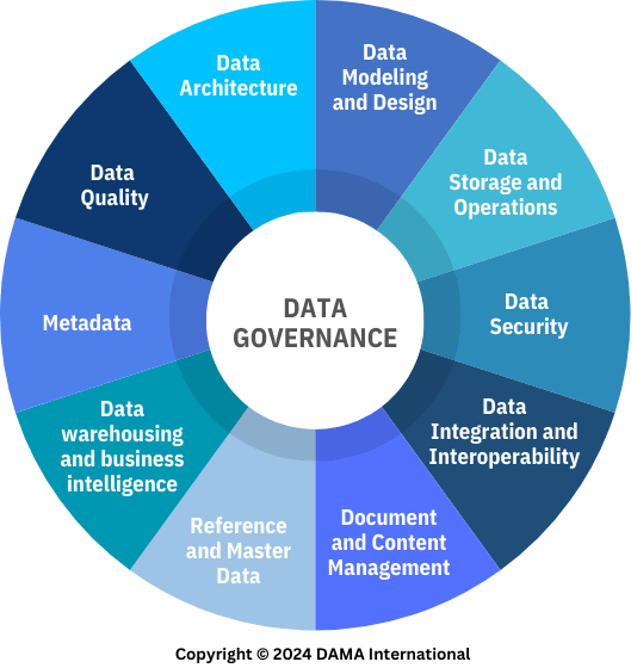

In parallel, industry frameworks such as the Data Management Body of Knowledge (DAMA-DMBOK), published by DAMA International, provided a more holistic and vendor-neutral articulation of that discipline.

While BCBS 239 focused primarily on risk data aggregation and reporting, DAMA-DMBOK defines data management as a multidimensional capability encompassing governance, architecture, data quality, metadata, security, master and reference data, and lifecycle management. It positions governance as the central coordinating function that aligns organisational capability and technical infrastructure, reinforcing organisational accountability across the enterprise.

As illustrated by the DAMA-DMBOK Wheel (Figure 2), data governance does not operate in isolation. It functions as the central coordinating capability that aligns architecture, quality, metadata, security, and operational disciplines within a coherent enterprise data management framework.

Together, regulatory pressure and industry standards significantly elevated the maturity of data governance within financial institutions. Banks now operate with formalised data ownership models, structured quality controls, governance forums, and documented policies that would have been uncommon prior to the financial crisis.

When effectively implemented, strong data governance enables far more than regulatory compliance. It provides the foundation for advanced risk modelling, real-time exposure monitoring, and more granular stress testing. It supports the development of data-driven products such as personalised lending, dynamic pricing models, and embedded finance solutions. It strengthens fraud detection capabilities through integrated cross-channel data, enhances customer analytics, and enables more accurate capital allocation decisions. In essence, mature data governance transforms fragmented information into a strategic capability that drives innovation and competitive differentiation.

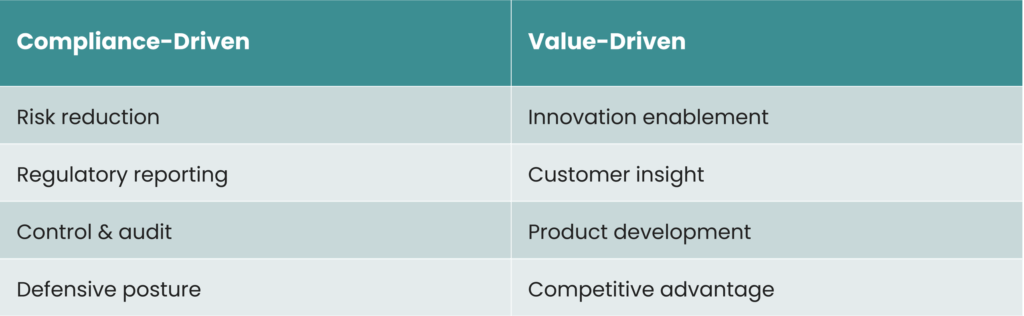

And yet, a structural paradox remains: banks have learned to govern data exceptionally well for compliance and regulatory assurance, but far less effectively for strategic decision-making and value creation.

The gap is widening.

Data governance is often strongest where regulatory exposure is highest (capital, liquidity, and supervisory reporting) yet comparatively weaker where competitive advantage is built: customer insight, product innovation, and operational optimisation.

Banking as a School of Data Governance

BCBS 239 forced banks to treat data as a strategic asset long before “data-driven” became a mainstream ambition. To comply, institutions were required to define clear data ownership, implement robust data quality controls, invest heavily in infrastructure, and establish formal data governance organisations.

This effort has been substantial and sustained. Over more than a decade, large multinational banking groups have allocated significant financial, technological, and human resources to address BCBS 239 requirements, often engaging in continuous transformation of their data and IT capabilities.

According to Deloitte's BCBS 239 Benchmark Survey 2024 (Deloitte, 2024), many institutions remain engaged in large-scale, multi-year remediation programmes. These initiatives are frequently triggered by high-severity supervisory findings, particularly those linked to fragmented IT landscapes and complex data architectures identified during on-site inspections. On average, remediation programmes span approximately three years, with some extending to five years or more to achieve sustainable compliance.

More than a decade after the principles were introduced, supervisory authorities continue to express dissatisfaction with overall implementation progress. A significant number of systemically important institutions have yet to achieve full compliance. This persistent gap underscores the operational, architectural, and organisational complexity of implementing truly integrated data governance frameworks at a global scale.

Even as financial institutions have built strong governance foundations, they face a new layer of complexity: the rapid evolution of technology. The shift toward decentralised data architectures, cloud migration, and domain-oriented models challenges traditional governance assumptions. Concepts such as “single source of truth” become more nuanced in distributed environments, where context-driven ownership models, such as those promoted by Data Mesh approaches, redefine authoritative sources as domain-level data products rather than a single centralised repository. Each domain becomes the definitive owner of its data within a bounded context, maintaining authority while enabling federation.
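To make the idea of domain-level authoritative sources more concrete, here is a minimal sketch in Python. Every name in it (`DataProduct`, `Catalogue`, the domains, the product names, the owners) is hypothetical and chosen purely for illustration; it does not describe any particular Data Mesh platform or bank.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataProduct:
    """A hypothetical domain-owned data product."""
    name: str
    domain: str
    owner: str


class Catalogue:
    """Maps (domain, concept) to exactly one authoritative data product."""

    def __init__(self) -> None:
        self._sources: dict[tuple[str, str], DataProduct] = {}

    def register(self, concept: str, product: DataProduct) -> None:
        key = (product.domain, concept)
        # Within one bounded context, a concept has a single authority.
        if key in self._sources:
            raise ValueError(
                f"{concept!r} already has an authoritative source in {product.domain!r}"
            )
        self._sources[key] = product

    def authoritative_source(self, domain: str, concept: str) -> DataProduct:
        return self._sources[(domain, concept)]


catalogue = Catalogue()
catalogue.register(
    "customer", DataProduct("retail_customer_profile", "retail", "Head of Retail Data")
)
catalogue.register(
    "customer", DataProduct("counterparty_master", "risk", "Head of Risk Data")
)

# The same concept resolves to a different authority in each domain.
print(catalogue.authoritative_source("retail", "customer").name)
print(catalogue.authoritative_source("risk", "customer").name)
```

The point of the sketch is the lookup key: authority is defined per domain and concept, so "the" source of customer data depends on the context in which the question is asked, while a second registration inside the same domain is rejected.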

These technological evolutions create tension not only within institutions but also between institutions and supervisors. Regulatory expectations are often framed around traditional architectural paradigms, while banks are increasingly adopting more flexible and decentralised models. As a result, innovative governance approaches may require additional explanation, evidence, and assurance to demonstrate that control, traceability, and data quality are not compromised. In many cases, the primary objective of data governance remains satisfying supervisory scrutiny, potentially slowing the adoption of more transformative architectural patterns.

The Cost of Governance and the Cost of Not Governing

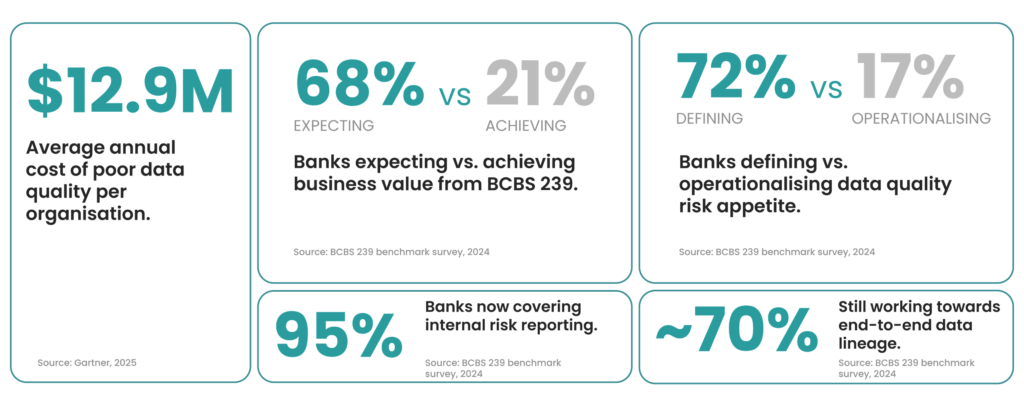

The cost of not governing data is demonstrably higher than the cost of governing it. According to Gartner research, poor data quality costs organisations an average of USD 12.9 million per year due to operational inefficiencies, rework, flawed decision-making, and missed business opportunities, illustrating that low-quality data undermines both compliance and business performance.

At a broader economic level, poor data quality has been widely estimated to cost trillions of dollars in lost value annually. In the US alone, this figure reaches USD 3.1 trillion per year, reflecting the systemic impact on productivity and growth (IBM, 2020).

Non-compliance with data-related regulations tends to incur downstream costs (regulatory penalties, extensive remediation efforts, operational disruption, and reputational damage) that significantly exceed the cost of maintaining robust governance and quality controls.

This reality helps explain why regulated industries, particularly banking, have embraced data governance as a necessity rather than an option.

Well-Governed Data, Limited Value Creation

Despite relatively mature governance structures, many banks still struggle to:

- Monetise their data assets;

- Develop data-driven products beyond regulatory and management reporting;

- Fully leverage advanced analytics and artificial intelligence.

Governance in banking remains predominantly defensive, focused on risk reduction, compliance, and control. While this discipline is essential, it often limits the ability to reuse governed data for innovation and growth.

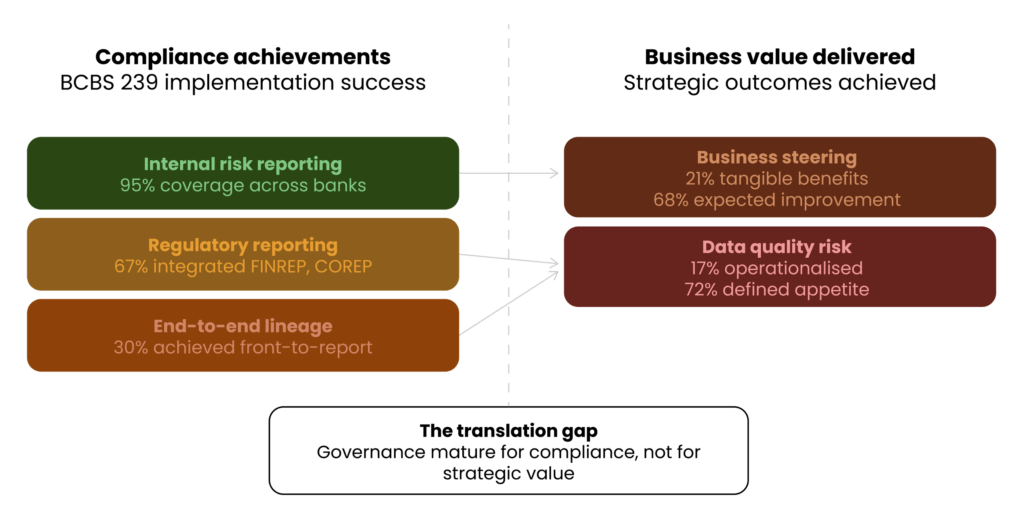

While BCBS 239 has clearly improved risk reporting and data consistency, its impact on broader business value remains limited. Although 95% of banks now cover internal risk reporting, important gaps persist: around one third have yet to fully integrate regulatory reporting frameworks such as FINREP and COREP, and nearly 70% are still working towards end-to-end data lineage from front office to reporting.

The gap between ambition and outcome is even more evident when looking at value creation. While 68% of institutions expected BCBS 239 to improve business steering, only 21% report having achieved tangible benefits so far. Similarly, despite 72% of banks defining a data quality risk appetite, just 17% have operationalised it effectively. These figures highlight a persistent reality: data governance in banking has matured for compliance, but translating it into strategic, value-driven outcomes remains a work in progress (Deloitte, 2024).
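One possible reading of "operationalising" a data quality risk appetite is turning a stated tolerance into a threshold that is checked automatically. The sketch below illustrates that reading in Python. The `Appetite` class, the 95% completeness threshold, and the sample values are assumptions made for illustration; they are not taken from the survey or from any regulatory text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Appetite:
    """Hypothetical risk appetite statement for one quality dimension."""
    dimension: str
    min_score: float  # lowest acceptable score, between 0 and 1


def completeness(values: list) -> float:
    """Share of values that are populated (not None)."""
    if not values:
        return 1.0
    return sum(v is not None for v in values) / len(values)


def breaches(scores: dict[str, float], appetites: list[Appetite]) -> list[str]:
    """Return the dimensions whose measured score falls below appetite."""
    return [
        a.dimension
        for a in appetites
        # A dimension that was never measured counts as a breach.
        if scores.get(a.dimension, 0.0) < a.min_score
    ]


country_codes = ["PT", None, "CH", "FR"]  # illustrative sample, not real data
scores = {"completeness": completeness(country_codes)}
appetites = [Appetite("completeness", 0.95)]

print(scores["completeness"])
print(breaches(scores, appetites))
```

In this toy case three of four values are populated, so completeness falls below the assumed appetite and the dimension is reported as a breach. In practice the breach would feed an issue management process rather than a print statement.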

Data Governance as a Foundation for AI

If the cost of poor governance is measured in financial and operational losses, its strategic value becomes most evident in the context of artificial intelligence. High-quality, well-governed data is not merely beneficial for AI; it is foundational. Without trusted, consistent, and traceable data, AI models do not generate insight; they amplify inconsistency and error.

In many ways, the banking sector is structurally well positioned for AI adoption precisely because of its long-standing investment in data governance. However, adoption remains cautious and risk-aware. Most current use cases focus on controlled environments, such as:

- Improving internal processes;

- Automating controls and reconciliations;

- Enhancing fraud detection and risk monitoring.

These applications align closely with existing governance, auditability, and risk management frameworks, making them natural extensions of established compliance capabilities.

The next frontier, leveraging governed data to design new products, hyper-personalised services, and interconnected data-driven ecosystems, presents a more complex challenge. It requires not only reliable data foundations, but also a cultural shift: from governing data primarily for supervision, to governing data for innovation.

Industry thought leadership reinforces this connection. IBM, for example, highlights data governance as a critical enabler of trustworthy AI, particularly within regulated industries where explainability, traceability, and accountability are non-negotiable requirements.

Tools Are Not a Governance Model

If artificial intelligence depends on governed data, governance itself depends on something even more fundamental: organisational alignment.

The market is saturated with tools promising to “implement data governance” or “fix data quality.”

They cannot.

No tool, regardless of technical maturity, can replace a clear governance model, defined and documented processes, explicit ownership and accountability, and sustained executive sponsorship.

Data governance cannot be implemented as a big-bang initiative. It is a continuous transformation journey that requires awareness and sustained cultural alignment across business and technology functions.

In its early stages, governance does not require sophisticated platforms. Simple tools (e.g. spreadsheets, structured templates, shared repositories, and lightweight workflows) are often sufficient to establish core artefacts such as data ownership registers, data definitions, quality controls, and issue management processes. Clear and tangible objectives enable early wins, and early wins create engagement.
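As an illustration of how lightweight such an artefact can be, the sketch below models a data ownership register as a plain list of records and flags entries that are missing an owner or a definition. The field names and sample entries are hypothetical; a spreadsheet with the same columns would serve the same purpose.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RegisterEntry:
    """One row of a hypothetical data ownership register."""
    data_element: str
    definition: str
    owner: str    # accountable business owner
    steward: str  # day-to-day contact for issues


def find_gaps(register: list[RegisterEntry]) -> list[str]:
    """Return data elements that lack an owner or a definition."""
    return [
        entry.data_element
        for entry in register
        if not entry.owner.strip() or not entry.definition.strip()
    ]


register = [
    RegisterEntry("customer_id", "Unique identifier of a customer", "Retail Banking", "J. Doe"),
    RegisterEntry("exposure_amount", "", "Credit Risk", "A. Smith"),
    RegisterEntry("country_code", "ISO 3166-1 alpha-2 country code", "", "M. Lee"),
]

print(find_gaps(register))
```

A check like this gives a tangible early objective (no data element without an owner and a definition) before any dedicated platform is introduced.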

Governance succeeds or fails through people. A governance model only works when individuals align with it rather than resist it. A shared strategy across business, risk, IT, and data teams is fundamental. Frameworks provide structure, but people provide legitimacy, accountability, and execution capability.

Even in an era increasingly shaped by artificial intelligence, the human dimension remains decisive. As highlighted in Humanizing Data Strategy: Leading Data with the Head and the Heart (Tiankai Feng, 2024), effective data leadership requires balancing analytical rigour with empathy, communication, and organisational trust. Technology enables governance, but human alignment sustains it.

Different Industries, Different Starting Points

Outside the banking sector, many industries remain at earlier stages of data governance maturity. Paradoxically, this can be an advantage.

They are not burdened by legacy regulatory remediation programmes or overly complex historical architectures. They have the opportunity to learn from the banking sector’s experience, to understand both what worked and what proved excessively rigid or resource-intensive. They can avoid over-engineering governance too early and instead design operating models that balance control and value creation from the outset.

Banking, in contrast, represents a decade-long laboratory of governance implementation. It demonstrates the importance of formal ownership, auditability, data quality controls, and executive sponsorship. But it also illustrates the risk of allowing governance to become primarily compliance-driven.

More mature organisations now face a different challenge: revisiting established governance frameworks to reduce friction, clarify responsibilities, adapt to decentralised architectures, comply with evolving data protection and privacy regulations, and simultaneously enable innovation.

The next evolution of data governance is not about adding more controls; it is about making governance scalable, contextual, and strategically aligned.

From Compliance Discipline to Strategic Capability

Based on my experience working with data governance in financial institutions, one lesson stands out clearly: governance succeeds when it is embedded into decision-making, not when it exists as a parallel control structure.

Effective data governance should:

- Clearly define ownership without creating bureaucracy;

- Establish quality controls without slowing down innovation;

- Enable traceability while supporting agility;

- Align with business strategy rather than operate independently from it.

The banking sector has demonstrated that disciplined governance is possible at scale. The opportunity now, both within banking and across other industries, is to apply that discipline in a way that unlocks value rather than merely satisfies supervision.

Data governance should not be seen as a regulatory obligation or a technical programme. It is an organisational capability. When implemented with clarity, proportionality, and strong leadership, it becomes the foundation for trusted analytics, resilient operations, and responsible artificial intelligence.

Ultimately, governance is not about data alone. It is about how organisations build trust (internally and externally) in the information that drives their decisions.

References

Basel Committee on Banking Supervision. (2013). Principles for effective risk data aggregation and risk reporting (BCBS 239). Bank for International Settlements. https://www.bis.org/publ/bcbs239.htm

DAMA International. (2017). DAMA-DMBOK: Data management body of knowledge (2nd ed.). Technics Publications. https://dama.org/dmbok2r-infographics/

Deloitte. (2024). BCBS 239 benchmark survey 2024. Deloitte.

Enricher. (n.d.). The cost of incomplete data. https://enricher.io/blog/the-cost-of-incomplete-data

Feng, T. (2024). Humanizing data strategy: Leading data with the head and the heart. Technics Publications.

Gitnux. (n.d.). Compliance statistics. https://gitnux.org/compliance-statistics/

IBM. (n.d.-a). Data quality. https://www.ibm.com/think/topics/data-quality

IBM. (n.d.-b). Data governance. https://www.ibm.com/topics/data-governance

About the Author

Mauro Pereira is a Data Governance & Analytics specialist at Ivy Partners. With a PhD investigating the relationship between cities and population health and a Master's in Architecture, he brings spatial intelligence and systems thinking from urban planning to enterprise data management.

His background as an Urban Planning Specialist and Business Analyst gave him the tools to navigate complexity across disciplines. He develops and maintains data governance policies, procedures, and standards while collaborating with cross-functional teams to ensure compliance and consistent practice. From SQL and Python to Power BI and GIS analysis, he combines technical capability with strategic thinking and a researcher’s discipline. Bridging governance and analytics, he applies data quality frameworks and validation guidelines to high-stakes environments, always seeking the best solution to reduce costs while maximizing results, guided by a commitment to sustainability and building data systems the right way.