Opinion Article by Sofiane Djerbi.

Banning ChatGPT in 2026 is like banning the internet in 1996. It is unrealistic, it hurts productivity, and it pushes usage to personal devices where risks compound silently.

Engineers paste code into Claude Code for review. Sales teams draft client emails with ChatGPT. Analysts use Gemini for summaries. Much of this happens through personal accounts on the corporate network, with no data protection, no policy enforcement, and no incident trail.

The first reflex has been to block. Add openai.com to the deny list. Push out a policy memo. Run DLP on outbound HTTPS. It has not worked.

Only 6% of organizations report complete visibility into AI usage (Cybersecurity Insiders, 2026). Netskope’s 2026 Cloud & Threat Report adds the other half of the picture: 47% of workplace AI usage runs through personal accounts rather than managed corporate ones.

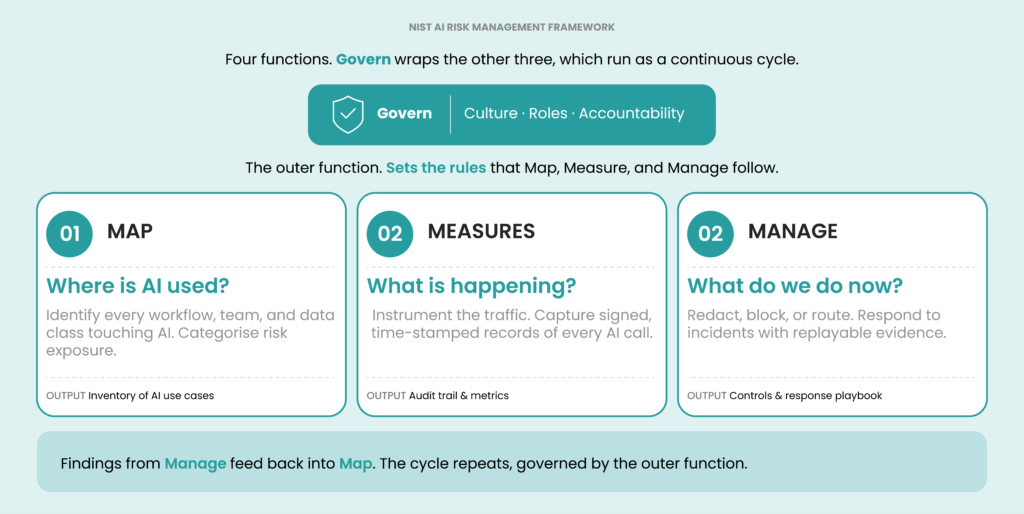

AI governance is rapidly converging around a shared idea: controls need to sit where AI traffic flows, not just in policy documents.

Frameworks such as NIST AI 600-1, ISO/IEC 42001, the EU AI Act, and Gartner’s AI TRiSM all differ in scope, but they point in the same direction.

Frameworks help, but having them on paper is not the same as deploying them. Most enterprises with mature security operations still lag behind their own employees on AI. The irony is that security controls are strongest where regulators focus (data residency, GDPR, SOC 2) and weakest where AI is most heavily used: engineering, legal, finance, sales, and marketing.

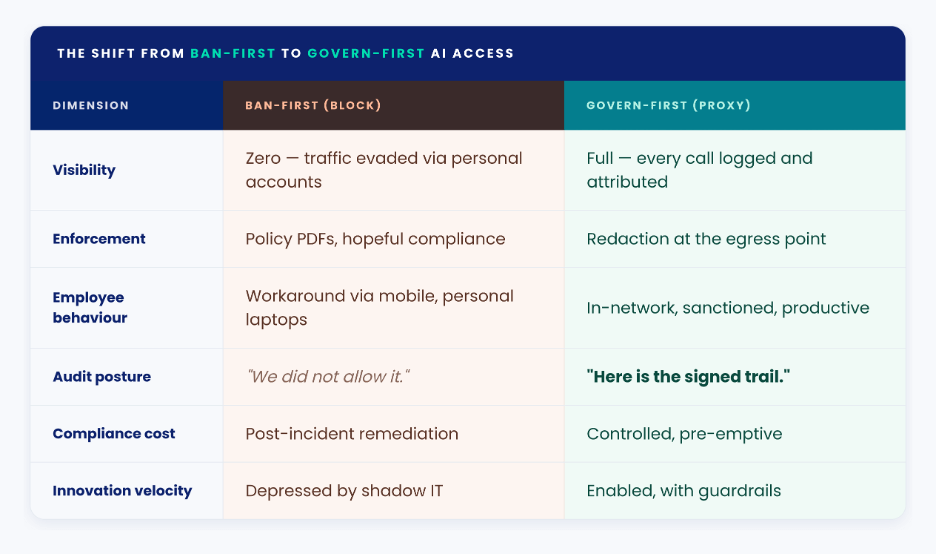

Govern-first does not mean reading every conversation. A well-designed AI gateway captures what it needs to prove control: who called which model, which policy fired, what was redacted, how much it cost. The rest is left alone. Prompt and response content sit in a separate, access-controlled store, and are only opened on a defined trigger, such as a security incident or a regulator request.
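To make that split concrete, here is a minimal Python sketch of the two stores. It assumes a gateway written in Python; `AuditEvent`, `ContentVault`, the field names, and the trigger names are hypothetical illustrations, not any product’s schema. The metadata record carries no prompt text, only an opaque reference into the content store, and the content store refuses reads without a recognised trigger.

```python
from __future__ import annotations

import uuid
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEvent:
    """Metadata the gateway always records: enough to prove control, no prompt text."""
    user: str
    team: str
    model: str
    policy_fired: str | None
    redactions: tuple[str, ...]  # categories only, e.g. ("EMAIL",), never the values
    cost_usd: float
    content_ref: str | None      # opaque pointer into the separate content store
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


class ContentVault:
    """Separate store for prompt/response text; every read needs a recognised trigger."""

    # Hypothetical trigger names mirroring the examples in the text.
    ALLOWED_TRIGGERS = {"security_incident", "regulator_request"}

    def __init__(self) -> None:
        self._blobs: dict[str, str] = {}

    def put(self, text: str) -> str:
        """Store content and return a random reference for the audit event."""
        ref = uuid.uuid4().hex
        self._blobs[ref] = text
        return ref

    def open(self, ref: str, trigger: str) -> str:
        """Return stored content only for an allowed trigger; otherwise refuse."""
        if trigger not in self.ALLOWED_TRIGGERS:
            raise PermissionError(f"trigger {trigger!r} does not permit content access")
        return self._blobs[ref]
```

Day-to-day dashboards and reports would read only `AuditEvent` records; `ContentVault.open` is the single, deliberately narrow path to the text itself.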

Shadow AI Is Not An Edge Case

According to Cyberhaven, 11% of what employees paste into external AI tools contains corporate secrets, from source code and pricing models to customer PII and deal terms. GitGuardian still finds API keys leaking through prompts. In most industries, adoption has outpaced policy.

DevOps has seen this before. Around 2010, CI/CD pipelines changed software delivery by making the safe path automatic. Developers didn’t stop risky behavior because of a policy; they stopped because the system wouldn’t let untested code ship.

AI access in 2026 needs the same idea: enforcement built into the infrastructure, not documents people ignore.

Vendor-specific blocking doesn’t scale. People cycle through ChatGPT, Claude, Gemini, Copilot, and a growing list of vertical tools. Blocking one mostly just sends them to the next. The only place you can enforce policy across all of them is the egress point: wherever the request leaves your network.
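A sketch of what a vendor-neutral egress rule can look like, assuming the firewall or forward proxy can tell whether a request originated from the AI gateway. The host list is illustrative and deliberately incomplete, and `egress_decision`, `is_ai_host`, and `from_gateway` are hypothetical names. The rule does not single out any vendor: every AI destination is reachable only through the gateway.

```python
from urllib.parse import urlsplit

# Illustrative, incomplete list of public AI API hosts. In practice this would be
# maintained from a URL-category feed rather than by hand.
KNOWN_AI_HOSTS = {
    "api.openai.com",
    "api.anthropic.com",
    "generativelanguage.googleapis.com",
}


def is_ai_host(host: str) -> bool:
    """True if host is a known AI API host or a subdomain of one."""
    host = host.lower()
    return any(host == h or host.endswith("." + h) for h in KNOWN_AI_HOSTS)


def egress_decision(url: str, from_gateway: bool) -> str:
    """Return 'allow', 'block', or 'not_ai' for an outbound request.

    The same rule applies to every AI vendor: direct calls are blocked,
    calls that leave through the gateway are allowed.
    """
    host = urlsplit(url).hostname or ""
    if not is_ai_host(host):
        return "not_ai"
    return "allow" if from_gateway else "block"
```

Supporting a new tool then means adding one hostname to the classification list, not writing another vendor-specific block.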

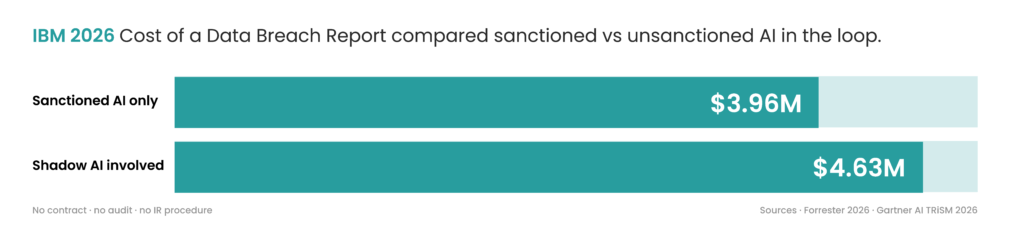

What Shadow AI Actually Costs

Unsanctioned AI adds about $670K to the average breach cost, per IBM’s 2026 Cost of a Data Breach Report. These tools have no contract in place, no audit trail, and no incident response procedure, so recovery and disclosure costs balloon.

That’s only the breach side. A growing academic literature approaches the same risk from the model side: researchers have extracted megabytes of ChatGPT’s training data for around $200 of API calls (Nasr et al., 2023).

An AI gateway does cost real money. For a mid-sized organization, once you factor in the proxy, policies, and review processes, you’re typically looking at low six figures a year. That is still less than the roughly $670K that unsanctioned AI adds to a single breach, let alone the cost of the breach itself.

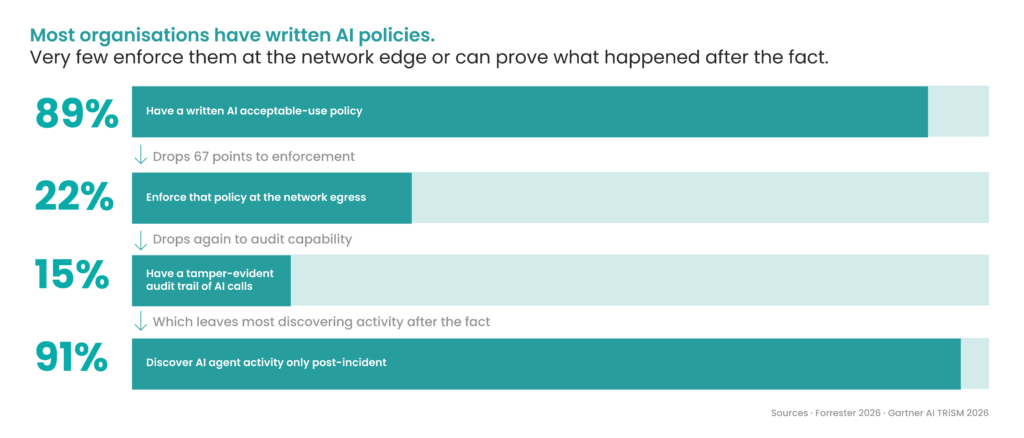

The Provenance Gap

Most organisations have written AI policies. Few can answer basic operational questions when something goes wrong: Which teams used which models last week? What data left the network through those calls? What decisions did AI agents take on the organisation’s behalf?

In most compliance setups, none of those can be answered confidently. Acceptable-use policies, vendor contracts, and security training don’t produce evidence after the fact.
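Once a gateway writes metadata for every call, the first two questions become queries rather than investigations. A minimal sketch, assuming audit events are available as dicts with hypothetical keys `ts` (a timezone-aware datetime), `team`, `model`, and `redactions` (a list of category names); `usage_last_week` is an illustrative name.

```python
from collections import Counter
from datetime import datetime, timedelta, timezone


def usage_last_week(events, now=None):
    """Count calls per (team, model) and redactions per category over the last 7 days."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=7)
    calls = Counter()
    redacted = Counter()
    for event in events:
        if event["ts"] < cutoff:
            continue  # outside the reporting window
        calls[(event["team"], event["model"])] += 1
        redacted.update(event["redactions"])  # categories only, never the values
    return calls, redacted
```

The answer is only as good as the coverage: traffic that bypasses the gateway never shows up here, which is why enforcement at the egress point matters.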

This matches the survey data: Forrester’s State of AI Security 2026 reports that 91% of organisations discover AI agent activity only after the fact. Most have a policy on file; what they lack is a way to verify it. Incident response on AI traffic needs the same primitives as any other service: signed events, timestamps, and replayable logs.
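A sketch of those primitives using only the Python standard library. Each entry is timestamped, authenticated with an HMAC over a canonical JSON encoding, and chained to the previous entry, so replaying the log in order detects any edited or removed entry except truncation at the tail, which needs an external anchor. This is an illustration under assumptions: `SignedLog` is a hypothetical name, an HMAC stands in for a true digital signature (a production log might prefer asymmetric signatures so verifiers never hold the signing key), and the key would live in a KMS or HSM rather than in code.

```python
import hashlib
import hmac
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous signature" for the first entry


class SignedLog:
    """Append-only log: each entry is HMAC-authenticated and chained to the previous one."""

    def __init__(self, key: bytes) -> None:
        self._key = key
        self.entries: list[dict] = []

    def _sign(self, body: dict) -> str:
        # Canonical JSON so the same body always yields the same bytes.
        canonical = json.dumps(body, sort_keys=True, separators=(",", ":")).encode()
        return hmac.new(self._key, canonical, hashlib.sha256).hexdigest()

    def append(self, event: dict) -> dict:
        prev = self.entries[-1]["sig"] if self.entries else GENESIS
        body = {"event": event, "ts": datetime.now(timezone.utc).isoformat(), "prev": prev}
        entry = {**body, "sig": self._sign(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Replay the log in order, checking every MAC and every chain link."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: entry[k] for k in ("event", "ts", "prev")}
            if entry["prev"] != prev:
                return False
            if not hmac.compare_digest(entry["sig"], self._sign(body)):
                return False
            prev = entry["sig"]
        return True
```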

The Reverse Proxy Pattern

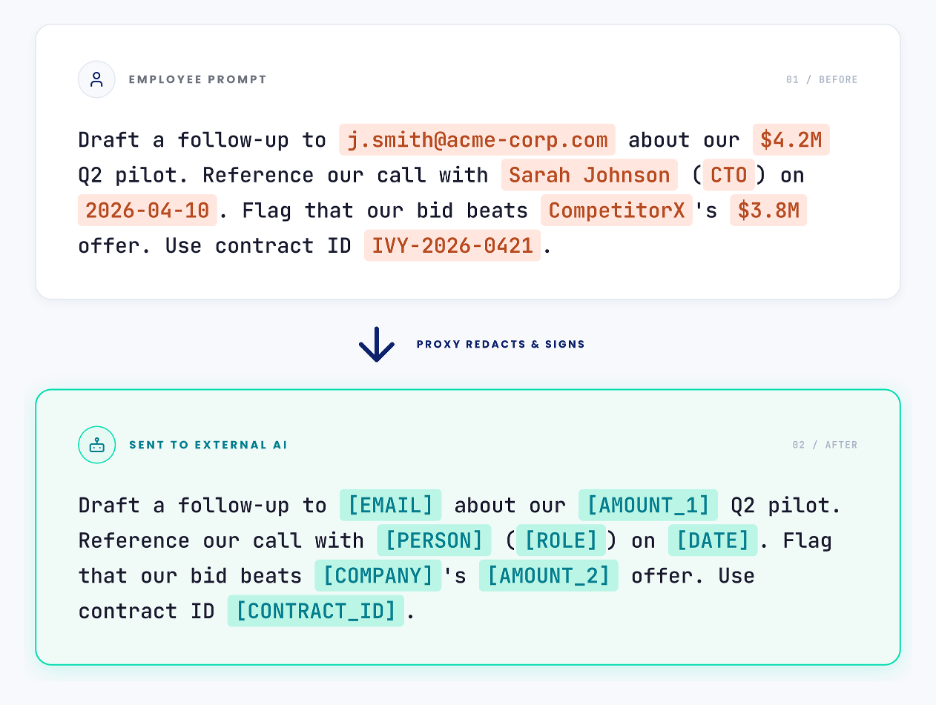

This pattern is familiar to anyone who has worked with web infrastructure. Instead of calling AI providers directly, internal clients such as employees, automation, or agents send requests through a central proxy.

That proxy enforces policy before anything leaves the network: it scans outbound requests for sensitive data, redacts what it finds, and ensures only approved models and use cases are permitted.
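A minimal sketch of the approval check, assuming policy is kept as a simple mapping from use case to approved models. The use-case names and model identifiers below are hypothetical placeholders, not real model IDs, and `check_request` is an illustrative name.

```python
from dataclasses import dataclass

# Hypothetical policy: which models each approved use case may call.
APPROVED = {
    "code_review": {"frontier-a", "frontier-b"},
    "email_drafting": {"frontier-a", "self-hosted-llama"},
}


@dataclass(frozen=True)
class Decision:
    allowed: bool
    reason: str  # recorded in the audit log as "which policy fired"


def check_request(use_case: str, model: str) -> Decision:
    """Allow a call only if the use case is approved and the model is approved for it."""
    models = APPROVED.get(use_case)
    if models is None:
        return Decision(False, f"use case {use_case!r} is not approved")
    if model not in models:
        return Decision(False, f"model {model!r} is not approved for {use_case!r}")
    return Decision(True, "ok")
```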

There is nothing fundamentally new here: like API gateways or service meshes, it applies a familiar control model, this time to AI. The main difference is that the payload is free-form text rather than structured JSON.

A typical flow: an employee submits a prompt containing sensitive data. Before it leaves the network, the proxy intercepts the request, redacts sensitive fields, and forwards a sanitised version to the external API, so the model still gets usable context. A minimised record of the event (metadata, the policy that fired, and the redactions applied) is written to an audit log. The original prompt, if retained at all, is held in a separate encrypted store with role-based access, short retention, and four-eyes review before anyone can read it.
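The redaction step in that flow can be sketched as below. This assumes simple regular-expression detectors for two illustrative categories (email addresses and AWS-style access key IDs); real gateways typically combine patterns, checksums, and ML-based entity recognition. `redact` and `handle` are hypothetical names, and the actual forwarding call is left out.

```python
from __future__ import annotations

import re

# Simplified detectors for two illustrative categories (assumption).
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "AWS_KEY": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}


def redact(prompt: str) -> tuple[str, dict[str, int]]:
    """Replace sensitive spans with typed placeholders; return text and counts per category."""
    counts: dict[str, int] = {}
    for label, pattern in PATTERNS.items():
        prompt, n = pattern.subn(f"[{label}]", prompt)
        if n:
            counts[label] = n
    return prompt, counts


def handle(prompt: str, user: str, model: str, audit_log: list[dict]) -> str:
    """Redact, record a minimised audit entry, and return the text to forward."""
    sanitised, counts = redact(prompt)
    audit_log.append({
        "user": user,
        "model": model,
        "policy_fired": "pii-redaction" if counts else None,
        "redactions": counts,  # counts per category, not the redacted values
    })
    return sanitised  # the caller would forward this to the external API
```

Typed placeholders such as `[EMAIL]` keep the sentence structure intact, which is what lets the model still work with the sanitised prompt.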

When To Use What

Not everything needs to go through the external proxy. Much of what enterprise teams use AI for works fine on a self-hosted model. Llama 3 or Mistral handle drafting, summarising, Q&A, and internal code completion well, with the prompt staying inside the network.

Routine work runs on private, self-hosted models: fixed cost, data stays local, no egress. Frontier work goes to public models such as GPT-5 or Claude Opus, called through the proxy: PII redacted at the boundary, elastic billing.

Both sides are governed. What’s left is a model-quality and cost question, which is an easier conversation to have with finance and engineering.
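One way to encode that split, as a sketch: route known routine task types to a self-hosted endpoint and everything else through the proxy. The task labels, both URLs, and `route` are hypothetical.

```python
# Hypothetical task labels for work a self-hosted model handles well.
ROUTINE_TASKS = {"drafting", "summarising", "qa", "internal_code_completion"}

SELF_HOSTED = "http://llm.internal:8000/v1"          # hypothetical self-hosted model endpoint
EXTERNAL_VIA_PROXY = "https://ai-gateway.internal/v1"  # hypothetical gateway endpoint


def route(task: str) -> str:
    """Keep routine tasks on the self-hosted model; send everything else through the proxy."""
    return SELF_HOSTED if task in ROUTINE_TASKS else EXTERNAL_VIA_PROXY
```

Defaulting unrecognised tasks to the proxy is a deliberate choice in this sketch: nothing reaches an external provider without passing through redaction and logging.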

A Proxy Is Not a Governance Model

A proxy alone is just infrastructure. Without clear ownership, policies, and operational responsibility, it becomes another unmanaged system.

You need a defined owner, usually security or platform engineering. You also need clear policies covering PII handling, approved models, and exceptions. Because the proxy is now a production dependency, it requires an on-call rotation. Finally, you need observability: usage by team, redactions, blocked requests, and cost.
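The exception process is the piece most often left informal. A minimal sketch, assuming exceptions are recorded per team and model with a named approver and a hard expiry date; `PolicyException` and `exception_applies` are hypothetical names.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class PolicyException:
    """A time-boxed exception, e.g. letting one team use a model outside the approved list."""
    team: str
    model: str
    approved_by: str  # the named owner who signed off
    expires: date     # last day the exception is valid


def exception_applies(exceptions, team: str, model: str, today: date) -> bool:
    """True if an unexpired exception covers this team/model pair."""
    return any(
        e.team == team and e.model == model and today <= e.expires
        for e in exceptions
    )
```

Because every exception carries an expiry, it lapses by default instead of quietly becoming permanent policy.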

This is standard DevOps practice. The same operating model used for CI/CD, secrets management, and Kubernetes is what makes an AI gateway sustainable.

In the EU, this is also where data-protection and labour law come in. A gateway that routes employee AI traffic is processing personal data in an employment context, so it needs a lawful basis under GDPR Article 6, a clear purpose and retention period, a DPIA under Article 35, and, in most member states, consultation with the works council or employee representatives. Employees should be told, in plain language, what is logged, what is not, who can access it, and under what conditions. Done well, this makes the control easier to defend, not harder.
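Two of those commitments, a retention period and controlled access, can be enforced in code rather than only promised in a notice. A minimal sketch, assuming captured prompts are kept in a mapping from reference to `(captured_at, text)`. The 30-day `RETENTION` value is a placeholder (the real period would come out of the DPIA), and `purge_expired` and `may_read` are hypothetical names.

```python
from __future__ import annotations

from datetime import datetime, timedelta, timezone

RETENTION = timedelta(days=30)  # placeholder; the actual period is set by the DPIA


def purge_expired(store: dict, now: datetime) -> int:
    """Delete captured prompts older than the retention period; return how many were removed.

    `store` maps content_ref -> (captured_at, text).
    """
    expired = [ref for ref, (captured_at, _) in store.items() if now - captured_at > RETENTION]
    for ref in expired:
        del store[ref]
    return len(expired)


def may_read(requester: str, approvers: set[str], trigger: str | None) -> bool:
    """Four-eyes rule: a documented trigger plus at least one approver other than the requester."""
    return trigger is not None and bool(approvers - {requester})
```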

Where To Start

Banning AI at the enterprise level just pushes usage onto personal devices. The practical alternative is to treat AI traffic the way you treat any other production dependency: log the metadata, set policies, redact what shouldn’t leave the network, keep content capture minimal and purpose-bound, and put someone on-call for the gateway.

For organizations still figuring out where to start, ownership is the first thing to define: a named person who is accountable for AI access end-to-end. If nobody can be named today, the risk is already compounding quietly, and buying a proxy without an owner just adds another piece of infrastructure nobody is watching.

Name a data protection owner alongside the security owner. In Europe the two roles have to agree on scope before the proxy goes live.

References

Carlini, N., Tramèr, F., Wallace, E., Jagielski, M., Herbert-Voss, A., Lee, K., Roberts, A., Brown, T., Song, D., Erlingsson, Ú., Oprea, A., & Raffel, C. (2021). Extracting training data from large language models. 30th USENIX Security Symposium (USENIX Security 21), 2633–2650.

Cheng, S., Liu, Y., Wang, S., & Zhang, T. (2025). Effective PII extraction from LLMs through augmented few-shot learning. 34th USENIX Security Symposium (USENIX Security 25).

Cyberhaven. (2026). AI safety & data risk report.

Forrester. (2026). The state of AI security.

Gartner. (2026). Strategic cybersecurity outlook and AI TRiSM framework.

IBM Security. (2026). Cost of a data breach report 2026.

Nasr, M., Carlini, N., Hayase, J., Jagielski, M., Cooper, A. F., Ippolito, D., Choquette-Choo, C. A., Wallace, E., Tramèr, F., & Lee, K. (2023). Scalable extraction of training data from (production) language models. arXiv preprint arXiv:2311.17035. https://arxiv.org/abs/2311.17035

National Institute of Standards and Technology. (2024). Artificial intelligence risk management framework: Generative artificial intelligence profile (NIST AI 600-1).

Netskope Threat Labs. (2026). Cloud & threat report.

Palo Alto Networks. (2026). State of AI security.

Wiz Research. (2026). Cloud strategy guide.

About the Author

Sofiane Djerbi is a DevOps Engineer at Ivy Partners with six years of experience building and automating scalable infrastructure. He specializes in managing complex environments using Kubernetes and Terraform, with a strong background in migrating legacy systems to modern, self-service platforms. At Ivy, Sofiane applies his expertise to maintain and optimize critical infrastructure within the high-stakes finance and trading sectors.