By Ricardo Sousa

By 2030, global data center energy consumption is projected to double. In this IvyInsights piece, Ricardo Sousa examines what it takes to scale sustainably, from cooling innovation to machine learning optimization, and why the path to PUE 1.0 is a systems engineering challenge, not a single fix.

Data centers now consume roughly as much electricity as France. By 2030, that figure is projected to double. The question facing the industry is clear: how do you scale to meet AI demand without breaking the grid, or the planet?

Every day, humanity generates approximately 400 million terabytes of data. Social media, AI workloads, IoT devices, e-commerce, and streaming services feed this torrent. Almost all of it ends up stored, processed, or transmitted through cloud infrastructures operated by global data center providers.

These providers operate a vast global network of facilities that must run almost continuously (close to 100% uptime). Those facilities consume massive amounts of electricity to power storage systems, computing workloads, network infrastructure, cooling systems, and backup and power conditioning equipment.

According to the International Energy Agency (IEA, 2025), data centers consumed approximately 415 terawatt-hours (TWh) of electricity in 2024, about 1.5% of global electricity consumption. This figure has grown by about 12% per year over the last five years. By 2030, consumption is projected to more than double to approximately 945 TWh, nearly 3% of global electricity. That implies growth of about 15% per year from 2024 to 2030, more than four times faster than the growth in electricity consumption from all other sectors.
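The growth rates above can be cross-checked with compound-growth arithmetic. The sketch below uses only the rounded IEA figures quoted in the text. It is a consistency check, not a reproduction of the IEA's own model.

```python
# Consistency check on the rounded IEA figures quoted in the text.
consumption_2024_twh = 415.0
consumption_2030_twh = 945.0
years = 2030 - 2024

# Compound annual growth rate: (end / start) ** (1 / years) - 1
cagr = (consumption_2030_twh / consumption_2024_twh) ** (1 / years) - 1

# Overall multiplier between the two years
growth_multiple = consumption_2030_twh / consumption_2024_twh

print(f"Implied annual growth 2024-2030: {cagr:.1%}")
print(f"Growth multiple 2024-2030: {growth_multiple:.2f}x")
```

With these inputs the implied rate is about 14.7% per year, a 2.28x increase. That is consistent with the roughly 15% annual growth and the "more than double" projection.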

But electricity is only part of the story.

Water consumption represents another critical challenge. The average data center consumes 300,000 gallons of water per day, with consumption typically rising alongside energy needs. Cooling infrastructures, especially evaporative and water-based systems, require massive volumes. In water-stressed regions, this creates a sustainability tension that compounds the energy problem:

- High-performance AI clusters increase thermal density

- Higher thermal density requires more aggressive cooling

- More aggressive cooling often increases water consumption

Over the years, this growth has forced operators to confront a fundamental question: How can data centers scale to meet increasing demand while reducing operational costs and environmental footprint?

The answer lies in comprehensive efficiency optimization, from equipment upgrades to machine learning models that fine-tune cooling in real time.

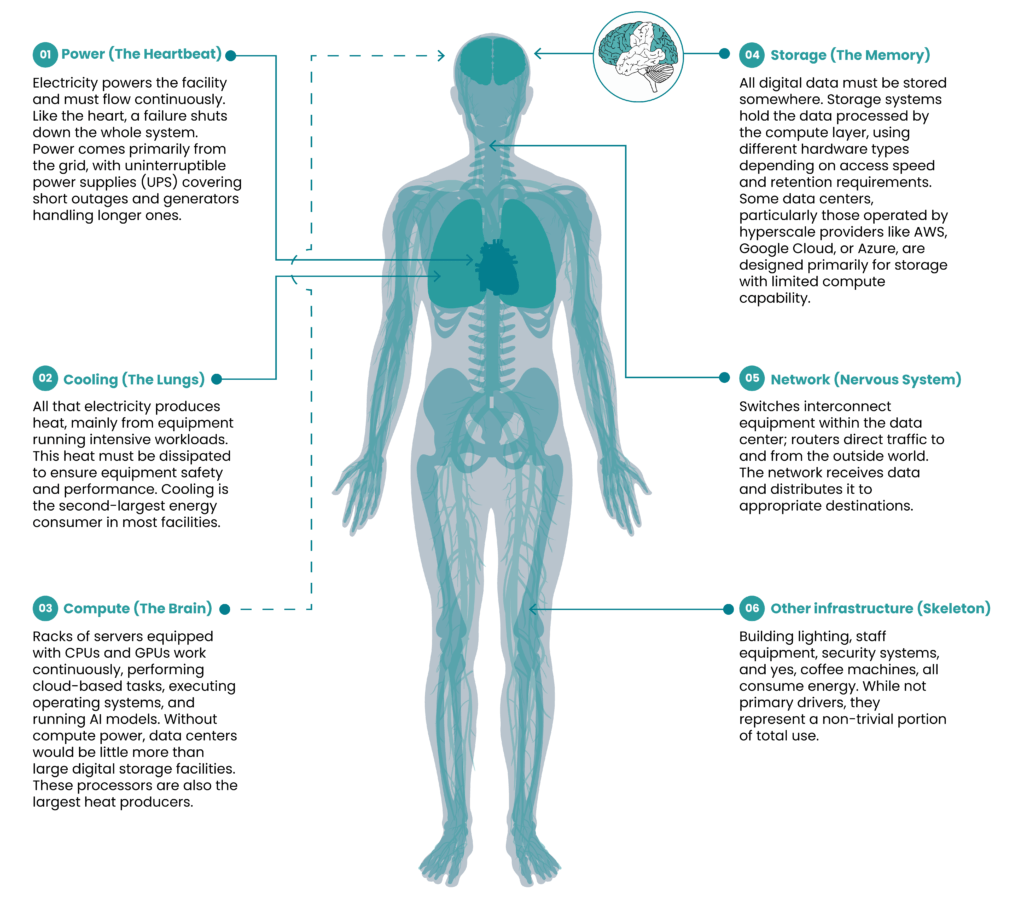

Anatomy of a Data Center

To understand how to optimize energy efficiency, you first need to understand what consumes the energy. A useful analogy, offered by Global Data Center Hub (2025), compares a data center to human physiology:

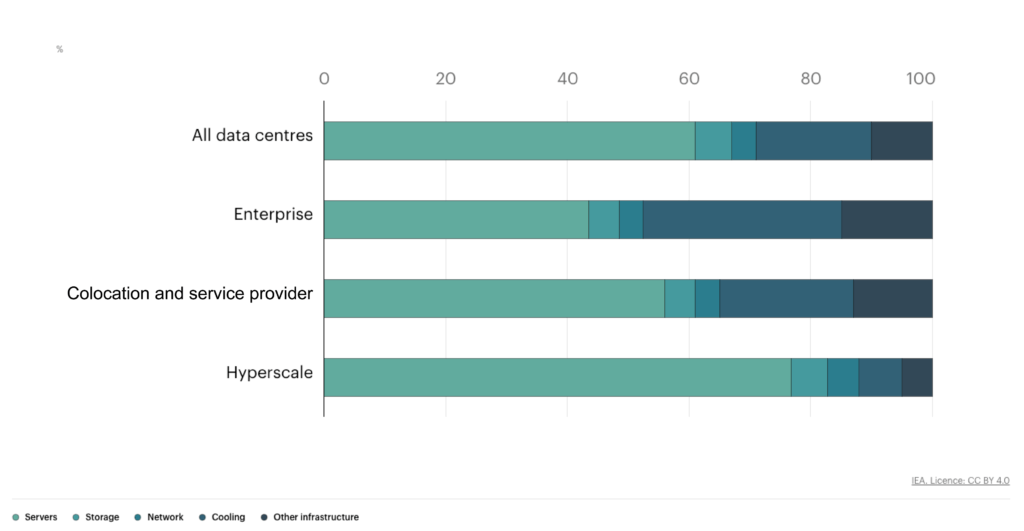

According to the IEA (2025), energy distribution varies by data center type, but a typical breakdown looks like this:

- Compute: 50-80% of total consumption (GPU-accelerated servers draw significantly more power per server than conventional CPU-based servers)

- Cooling: 20-40% of total consumption

- Storage, network, and other infrastructure: 15-20% combined

This distribution reveals where optimization efforts should focus: compute and cooling account for the vast majority of energy use.

Measuring Efficiency: PUE and WUE

The industry relies on two key metrics to evaluate data center efficiency.

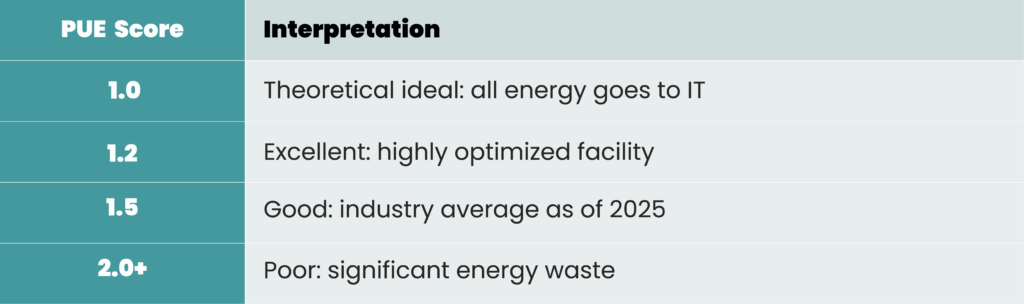

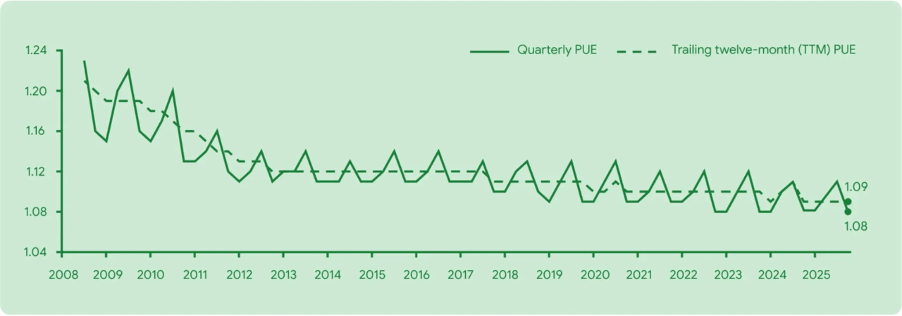

Power Usage Effectiveness (PUE)

Created by The Green Grid in 2007, PUE compares total facility energy consumption with the energy delivered to IT equipment:

PUE = Total facility energy ÷ IT equipment energy

A perfect PUE of 1.0 means every watt feeds directly into IT equipment. Higher values indicate more energy lost to cooling and infrastructure overhead.

The industry average has improved dramatically, from approximately 2.5 in 2007 to around 1.56 today. Leaders like Google (1.09) and Amazon (1.15) have demonstrated that approaching 1.0 is achievable through deliberate optimization.
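The following minimal sketch shows how the metric is calculated. It assumes annual energy totals are already available from facility and IT meters, and the readings are hypothetical. It computes PUE and the share of facility energy lost to overhead.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


def overhead_share(pue_value: float) -> float:
    """Fraction of total facility energy that does not reach IT equipment."""
    return 1 - 1 / pue_value


# Hypothetical annual meter readings (kWh) for one facility
total_kwh = 15_600_000
it_kwh = 10_000_000

facility_pue = pue(total_kwh, it_kwh)
print(f"PUE: {facility_pue:.2f}")
print(f"Overhead share of total energy: {overhead_share(facility_pue):.1%}")
```

A PUE of 1.56 means roughly 36% of the energy entering the facility never reaches IT equipment. At a PUE of 1.09, the overhead share falls to about 8%.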

Water Usage Effectiveness (WUE)

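Less discussed but equally important, WUE measures annual site water usage relative to IT equipment energy, expressed in litres per kilowatt-hour (L/kWh):

WUE = Annual site water usage (L) ÷ IT equipment energy (kWh)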

As cooling systems evolve and water scarcity increases, WUE is becoming a critical companion metric to PUE. A facility might achieve excellent PUE through water-intensive evaporative cooling, only to face sustainability challenges in drought-prone regions.
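Two hypothetical facilities serving the same IT load make the trade-off concrete. The PUE and water figures are invented for illustration. The point is that the design with the better PUE can have the worse WUE.

```python
def wue(annual_site_water_litres: float, it_equipment_kwh: float) -> float:
    """Water Usage Effectiveness in litres per kWh of IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return annual_site_water_litres / it_equipment_kwh


# Two hypothetical facilities with the same IT load but different cooling designs
it_kwh = 10_000_000
evaporative = {"pue": 1.15, "water_litres": 18_000_000}
closed_loop = {"pue": 1.30, "water_litres": 2_000_000}

for name, site in [("evaporative", evaporative), ("closed-loop", closed_loop)]:
    site_wue = wue(site["water_litres"], it_kwh)
    print(f"{name}: PUE {site['pue']:.2f}, WUE {site_wue:.2f} L/kWh")
```

Here the evaporative design wins on PUE but uses nine times as much water per kWh (1.8 vs 0.2 L/kWh).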

The Business Case for Efficiency

Efficiency is not just an environmental concern. It is an economic imperative.

Lower PUE translates directly to reduced operating costs. For the same IT load, a data center with a PUE of 1.5 uses, and pays for, 50% more electricity than one at the theoretical minimum of 1.0. At scale, closing even part of that gap can save millions annually.
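This back-of-the-envelope sketch works through the arithmetic. It assumes a constant 20 MW IT load and a flat electricity price of $80/MWh, and both figures are hypothetical.

```python
HOURS_PER_YEAR = 8760


def annual_electricity_cost(it_load_mw: float, pue: float, price_per_mwh: float) -> float:
    """Annual cost of running a constant IT load at a given PUE."""
    facility_mwh = it_load_mw * pue * HOURS_PER_YEAR  # IT energy scaled up by PUE
    return facility_mwh * price_per_mwh


# Hypothetical inputs: 20 MW constant IT load, $80/MWh flat price
it_load_mw = 20.0
price = 80.0

cost_at_1_5 = annual_electricity_cost(it_load_mw, 1.5, price)
cost_at_1_2 = annual_electricity_cost(it_load_mw, 1.2, price)
cost_at_1_0 = annual_electricity_cost(it_load_mw, 1.0, price)

print(f"PUE 1.5: ${cost_at_1_5:,.0f}")
print(f"PUE 1.0: ${cost_at_1_0:,.0f}")
print(f"Saving from 1.5 to 1.2: ${cost_at_1_5 - cost_at_1_2:,.0f}")
```

Under these assumptions the PUE 1.5 facility spends about $21.0 million a year, against $14.0 million at PUE 1.0. Moving from 1.5 to 1.2 alone saves about $4.2 million annually.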

Beyond cost, efficiency affects grid stability. The rapid growth of data centers, especially in concentrated areas, strains electrical infrastructure. Grid operators must invest significantly to meet demand. In some regions, new data center projects face delays or restrictions because the grid cannot support them.

Efficiency also affects water availability, carbon emissions, and corporate sustainability commitments. For operators serving clients with ESG requirements, demonstrable efficiency becomes a competitive advantage.

Five Optimization Strategies

1. Cooling Transformation

Cooling has been the primary focus for PUE reduction. It directly affects both energy consumption and equipment performance.

Air Cooling: The traditional approach, in which HVAC systems, fans, and vents circulate cold air and expel heat. It is still used in facilities with moderate equipment density but struggles with high-performance AI workloads. Air cooling often creates over-chilled or under-chilled zones where airflow is poor.

Hot/Cold Aisle Containment: A layout improvement that physically separates equipment racks into alternating rows. The cold aisle supplies cool air to the front of equipment; heat exhausts through the rear into the hot aisle, where it is extracted. This prevents mixing and improves efficiency without changing cooling technology.

Liquid Cooling: Now standard in high-density environments. Direct-to-chip cooling circulates liquid to CPUs and GPUs, removing heat far more effectively than air. Immersion cooling goes further, submerging entire servers in non-conductive fluid.
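Containment and liquid cooling both follow from the same heat balance. The flow needed to remove a heat load Q is V = Q / (ρ · c_p · ΔT). The sketch below applies this to a hypothetical 40 kW rack, using approximate room-temperature properties for air and water. Real designs also account for pressure drop, fan and pump power, and humidity.

```python
# Volumetric flow needed to carry heat away: V = Q / (rho * cp * dT)
# Property values are approximate, near room temperature.
AIR_DENSITY = 1.2        # kg/m^3
AIR_CP = 1005.0          # J/(kg*K)
WATER_DENSITY = 997.0    # kg/m^3
WATER_CP = 4180.0        # J/(kg*K)


def required_flow_m3_per_s(heat_w: float, density: float, cp: float, delta_t_k: float) -> float:
    """Volumetric flow (m^3/s) that removes heat_w watts with a delta_t_k temperature rise."""
    return heat_w / (density * cp * delta_t_k)


rack_heat_w = 40_000.0  # hypothetical high-density AI rack

# With hot and cold air kept apart, the air picks up a larger temperature rise,
# so less of it has to be moved for the same heat load.
for delta_t in (8.0, 12.0):
    flow = required_flow_m3_per_s(rack_heat_w, AIR_DENSITY, AIR_CP, delta_t)
    print(f"Air, dT={delta_t:.0f} K: {flow:.2f} m^3/s")

water_flow = required_flow_m3_per_s(rack_heat_w, WATER_DENSITY, WATER_CP, 10.0)
print(f"Water, dT=10 K: {water_flow * 60_000:.1f} L/min")  # m^3/s to L/min
```

Raising the air temperature rise from 8 K to 12 K cuts the required airflow by a third. Containment makes that possible by stopping hot and cold air from mixing. Water carries roughly 3,500 times more heat per unit volume than air for the same temperature rise. That is why under 60 litres of water per minute can do the work of several cubic metres of air per second.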

Free Cooling: Where environmental conditions permit, facilities can use outside air or natural water sources for cooling, dramatically reducing energy consumption. This is why location matters.

A well-known example is Google’s data center in Hamina, Finland. The facility uses seawater from the Gulf of Finland for cooling. Instead of relying on energy-intensive chillers, it circulates cold seawater through heat exchangers to dissipate heat from servers. The water returns to the sea without chemical alteration and within regulated temperature limits. This design significantly reduces mechanical cooling needs and overall electricity consumption.

The Nordic region, particularly Finland and Sweden, hosts several hyperscale data centers precisely because cold ambient air is available for much of the year.
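Why climate matters can be sketched by counting the hours in which outside air alone can meet the supply setpoint, as an air-side economizer would. The sketch assumes a 22 °C supply setpoint and a fixed 3 °C approach (the margin between outdoor temperature and setpoint). It uses two hypothetical sinusoidal temperature profiles. A real assessment would use measured hourly weather data and humidity limits.

```python
import math


def synthetic_hourly_temps(annual_mean_c: float, seasonal_amp_c: float, daily_amp_c: float) -> list[float]:
    """Hypothetical 8760-hour outdoor temperature profile (sinusoidal, no weather noise)."""
    temps = []
    for hour in range(8760):
        day = hour / 24
        seasonal = -seasonal_amp_c * math.cos(2 * math.pi * day / 365)  # coldest at day 0
        daily = -daily_amp_c * math.cos(2 * math.pi * (hour % 24) / 24)  # coldest at midnight
        temps.append(annual_mean_c + seasonal + daily)
    return temps


def free_cooling_fraction(temps_c: list[float], supply_setpoint_c: float, approach_c: float) -> float:
    """Share of hours when outdoor air is cold enough to meet the setpoint without chillers."""
    threshold = supply_setpoint_c - approach_c
    return sum(t <= threshold for t in temps_c) / len(temps_c)


# Hypothetical climates: a cold Nordic-style site and a hot inland site
nordic = synthetic_hourly_temps(annual_mean_c=5.0, seasonal_amp_c=11.0, daily_amp_c=4.0)
hot = synthetic_hourly_temps(annual_mean_c=24.0, seasonal_amp_c=8.0, daily_amp_c=7.0)

for name, temps in [("Nordic", nordic), ("Hot inland", hot)]:
    share = free_cooling_fraction(temps, supply_setpoint_c=22.0, approach_c=3.0)
    print(f"{name}: free cooling available {share:.0%} of hours")
```

The cold profile allows free cooling for almost the entire year. The hot profile allows it for well under half of the hours.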

2. Data-Driven Monitoring

You cannot optimize what you cannot measure.

Data Center Infrastructure Management (DCIM) platforms provide real-time visibility into facility operations. With proper IoT infrastructure, operators can monitor energy consumption, temperature, humidity, and workloads at the equipment level. This helps identify faults, load imbalances, and optimization opportunities in real time.

But monitoring alone is not enough. The data must be processed, cleaned, and transformed into actionable insights. Dashboards that display real-time PUE, track trends over time, and alert operators to anomalies turn raw sensor data into operational intelligence.
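The dashboard logic described above can be sketched in a few lines. The sketch assumes 15-minute readings from a facility meter and an IT meter, and the values are hypothetical. The anomaly rule is a simple trailing-window z-score, one of many possible approaches and not a feature of any particular DCIM product.

```python
from statistics import mean, stdev


def interval_pue(readings: list[tuple[float, float]]) -> list[float]:
    """PUE per interval from (facility_kwh, it_kwh) meter readings."""
    return [facility / it for facility, it in readings]


def flag_anomalies(values: list[float], window: int = 6, z_threshold: float = 3.0) -> list[int]:
    """Indices whose value deviates more than z_threshold standard deviations
    from the mean of the preceding `window` values."""
    flagged = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma > 0 and abs(values[i] - mu) / sigma > z_threshold:
            flagged.append(i)
    return flagged


# Hypothetical 15-minute readings: (facility kWh, IT kWh)
readings = [
    (1250, 1000), (1248, 1000), (1255, 1002), (1251, 998), (1249, 1001),
    (1253, 1000), (1252, 999), (1380, 1001),  # hypothetical cooling fault
    (1254, 1000), (1250, 1000),
]

pue_series = interval_pue(readings)
for i in flag_anomalies(pue_series):
    print(f"interval {i}: PUE {pue_series[i]:.3f} looks anomalous")
```

With these readings, only the jump in interval 7 is flagged.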

Without DCIM, identifying inefficiencies becomes guesswork.

3. Machine Learning and AI Optimization

Here is the irony: AI workloads are driving the surge in data center energy demand, but AI is also one of the most powerful tools for reducing it.

Machine learning models can optimize cooling systems by analysing temperature, humidity, and airflow data in real time, adjusting parameters to match actual needs rather than worst-case assumptions. If a model detects that a server room area is colder than necessary, it can automatically raise the cooling setpoint.

A landmark example: in 2016, DeepMind applied machine learning to cooling optimization in Google's data centers. The result was a reduction of up to 40% in the energy used for cooling, which equated to a 15% reduction in overall PUE overhead. The system analyses data from thousands of sensors and adjusts hundreds of variables every five minutes.

Predictive models also help prevent equipment malfunctions, schedule maintenance to minimize downtime, and use weather forecasts to plan cooling at facilities that rely on free cooling.

The techniques are proven. What matters now is deployment at scale.

4. IT Infrastructure Optimization

As the largest energy consumer, IT infrastructure offers significant optimization potential.

Hardware Selection: Newer equipment is more efficient. Current-generation GPUs can deliver twice the performance of their predecessors, so the same workload can run on roughly half the hardware. The energy saving depends on performance per watt: it approaches half only if each new device draws about the same power as the one it replaces. Manufacturers increasingly include built-in optimization software, reducing the need for custom solutions.
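The following sketch shows why energy per workload tracks performance per watt rather than raw performance. It assumes a fixed job, constant throughput, and constant power draw, and ignores idle power and cooling. All figures are hypothetical.

```python
def energy_per_job_kwh(job_work_units: float, throughput_units_per_hour: float, power_kw: float) -> float:
    """Energy to finish a fixed job on one device: runtime (h) * power draw (kW)."""
    runtime_h = job_work_units / throughput_units_per_hour
    return runtime_h * power_kw


job = 1_000_000.0  # hypothetical fixed amount of work

old = energy_per_job_kwh(job, throughput_units_per_hour=1_000.0, power_kw=0.7)
new_same_power = energy_per_job_kwh(job, throughput_units_per_hour=2_000.0, power_kw=0.7)
new_higher_power = energy_per_job_kwh(job, throughput_units_per_hour=2_000.0, power_kw=1.0)

print(f"Old device:             {old:.0f} kWh")
print(f"2x faster, same power:  {new_same_power:.0f} kWh")
print(f"2x faster, 1.0 kW draw: {new_higher_power:.0f} kWh")
```

Doubling throughput halves energy per job only when power draw is unchanged (350 vs 700 kWh here). With a higher 1.0 kW draw, the saving shrinks to about 29% (500 kWh).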

Virtualization: Running multiple applications on the same physical servers improves utilization and reduces idle equipment. Proper workload balancing and dynamic resource allocation yield substantial efficiency gains.
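Consolidating workloads onto fewer servers is a bin-packing problem. A common heuristic is first-fit decreasing: sort workloads from largest to smallest and place each on the first server with room. The sketch assumes a single resource (CPU cores), hypothetical demands, and that freed servers are powered off. Real placement also weighs memory, I/O, and headroom for peaks.

```python
def first_fit_decreasing(vm_demands: list[float], server_capacity: float) -> list[list[float]]:
    """Pack VM CPU demands onto servers using the first-fit-decreasing heuristic."""
    servers: list[list[float]] = []
    for demand in sorted(vm_demands, reverse=True):
        if demand > server_capacity:
            raise ValueError(f"VM demand {demand} exceeds server capacity")
        for server in servers:
            if sum(server) + demand <= server_capacity:
                server.append(demand)
                break
        else:
            servers.append([demand])  # no existing server fits: power on a new one
    return servers


# Hypothetical CPU demands (cores) for 12 workloads, each originally on its own server
vms = [6, 4, 10, 3, 8, 2, 5, 7, 3, 9, 4, 6]
placement = first_fit_decreasing(vms, server_capacity=32)

IDLE_POWER_W = 150  # hypothetical idle draw per physical server
freed = len(vms) - len(placement)
print(f"Servers needed: {len(placement)} (was {len(vms)})")
print(f"Idle power avoided: {freed * IDLE_POWER_W} W")
```

Here the 12 workloads fit on 3 servers, freeing 9 machines.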

Power Distribution: Intelligent Power Distribution Units (PDUs) help identify and manage equipment workloads, distributing energy to where it is most needed and flagging optimization opportunities.

5. Renewable Energy Integration

Reducing consumption is one approach. Generating clean energy is another.

On-site solar and wind installations, where location permits, reduce grid dependence and can provide significant electricity generation. Battery storage allows excess production to be saved for peak demand periods.
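A simple greedy dispatch rule shows how battery storage shifts surplus solar into later hours. The rule charges from surplus and discharges to cover shortfalls. The sketch assumes hourly steps, a 500 kWh battery with a 250 kWh-per-hour rate limit, and 90% charging efficiency. The solar and load profiles are hypothetical. Real dispatch also considers tariffs, forecasts, and battery degradation.

```python
def dispatch_battery(solar_kwh: list[float], load_kwh: list[float], capacity_kwh: float,
                     max_rate_kwh: float, charge_efficiency: float = 0.9) -> tuple[list[float], list[float]]:
    """Greedy hourly dispatch: store surplus solar, discharge to cover shortfalls.

    Returns (grid import per hour, state of charge after each hour), all in kWh.
    Losses are applied on charging only; surplus the battery cannot absorb is
    assumed to be exported or curtailed and is not tracked.
    """
    soc = 0.0
    grid_import: list[float] = []
    soc_trace: list[float] = []
    for solar, demand in zip(solar_kwh, load_kwh):
        net = solar - demand  # > 0 surplus, < 0 shortfall
        if net > 0:
            # Surplus drawn into the battery, limited by rate and remaining headroom
            charge = min(net, max_rate_kwh, (capacity_kwh - soc) / charge_efficiency)
            soc += charge * charge_efficiency
            grid_import.append(0.0)
        else:
            discharge = min(-net, max_rate_kwh, soc)
            soc -= discharge
            grid_import.append(-net - discharge)
        soc_trace.append(soc)
    return grid_import, soc_trace


# Hypothetical six-hour profile (kWh per hour): on-site solar and a flat facility load
solar = [0.0, 300.0, 600.0, 700.0, 300.0, 0.0]
load = [400.0] * 6

grid, soc = dispatch_battery(solar, load, capacity_kwh=500.0, max_rate_kwh=250.0)
without_battery = sum(max(demand - s, 0.0) for s, demand in zip(solar, load))
print(f"Grid import without battery: {without_battery:.0f} kWh")
print(f"Grid import with battery:    {sum(grid):.0f} kWh")
```

In this example the battery cuts grid import from 1,000 kWh to 650 kWh over the six hours by storing midday surplus for later hours.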

For facilities where on-site generation is impractical, Power Purchase Agreements (PPAs) provide access to renewable energy at fixed long-term prices. This reduces cost unpredictability while meeting sustainability commitments.

Microsoft, Google, and Amazon have all made substantial investments in renewable energy, both to power their data centers and to offset emissions from facilities that still rely on grid power.

Planning for Efficiency: New Builds

For data centers still in planning and design phases, efficiency decisions made early have outsized impact.

Location Selection:

- Access to reliable electrical grid and fibre-optic connectivity is mandatory

- Cooler climates reduce cooling costs (Nordic countries, parts of Canada)

- Access to water sources enables liquid cooling options

- High solar or wind availability supports on-site generation

- Low-risk geography (avoiding flood zones, earthquake-prone areas) reduces operational risk
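These criteria can be turned into a decision by treating the mandatory ones as hard filters and combining the rest in a weighted score. The sites, scores, and weights below are hypothetical. In practice they would come from grid studies, climate data, and hazard maps.

```python
# Hypothetical candidate sites. Mandatory criteria (grid, fibre) act as hard filters;
# the rest are scored 0-10 and combined with illustrative weights that sum to 1.
WEIGHTS = {"cool_climate": 0.35, "water_access": 0.15, "renewables": 0.30, "low_hazard": 0.20}

sites = [
    {"name": "Site A", "grid": True, "fibre": True,
     "cool_climate": 9, "water_access": 8, "renewables": 6, "low_hazard": 8},
    {"name": "Site B", "grid": True, "fibre": True,
     "cool_climate": 4, "water_access": 3, "renewables": 9, "low_hazard": 7},
    {"name": "Site C", "grid": False, "fibre": True,  # fails a mandatory criterion
     "cool_climate": 10, "water_access": 9, "renewables": 9, "low_hazard": 9},
]


def score(site: dict) -> float:
    """Weighted sum of the non-mandatory criteria."""
    return sum(weight * site[criterion] for criterion, weight in WEIGHTS.items())


eligible = [s for s in sites if s["grid"] and s["fibre"]]
ranking = sorted(eligible, key=score, reverse=True)
for s in ranking:
    print(f"{s['name']}: {score(s):.2f}")
```

Site C scores best on paper but is excluded because it lacks grid access. This is why mandatory criteria should filter candidates rather than merely lower their scores.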

Facility Layout:

- Hot/cold aisle containment should be designed in, not retrofitted

- Airflow patterns must account for equipment heat generation

- Reserve space for future liquid cooling infrastructure, even if it is not deployed immediately

Equipment Selection:

- Prioritize energy-efficient hardware with built-in optimization

- Plan for GPU-heavy workloads if AI is part of the roadmap

- Consider total cost of ownership, not just purchase price
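The following TCO comparison makes simplifying assumptions: constant power draw, a flat electricity price, no discounting, and hypothetical prices for two servers. Facility overhead is included by multiplying IT energy by PUE. The cheaper server to buy is not necessarily the cheaper one to own.

```python
def total_cost_of_ownership(purchase_price: float, avg_power_kw: float, years: int,
                            pue: float, price_per_kwh: float, annual_maintenance: float) -> float:
    """Purchase + electricity (including facility overhead via PUE) + maintenance, undiscounted."""
    energy_kwh = avg_power_kw * 8760 * years * pue
    return purchase_price + energy_kwh * price_per_kwh + annual_maintenance * years


# Hypothetical servers: B costs more up front but draws less power
common = dict(years=5, pue=1.4, price_per_kwh=0.12, annual_maintenance=500.0)
server_a = total_cost_of_ownership(purchase_price=8_000.0, avg_power_kw=0.9, **common)
server_b = total_cost_of_ownership(purchase_price=9_500.0, avg_power_kw=0.6, **common)

print(f"Server A 5-year TCO: ${server_a:,.0f}")
print(f"Server B 5-year TCO: ${server_b:,.0f}")
```

With these inputs, server B costs $1,500 more up front but about $700 less over five years.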

Operating for Efficiency: Existing Facilities

For operational data centers, efficiency is an ongoing discipline.

Continuous Monitoring: DCIM platforms should track PUE and WUE in real time, with alerts for anomalies and trend analysis for long-term planning.

Regular Audits: Periodic assessments identify optimization opportunities that real-time monitoring might miss: outdated equipment, suboptimal configurations, or processes that have drifted from best practice.

Staged Upgrades: Not everything can be replaced at once. Prioritize upgrades by impact: cooling systems and high-consumption compute equipment typically offer the best returns.
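Prioritizing by impact can be sketched as a greedy ranking by simple payback (upfront cost divided by annual saving) within a fixed budget. The upgrade names, costs, and savings are hypothetical. A greedy pass is a heuristic rather than an optimal allocation.

```python
# Hypothetical upgrade candidates: (name, upfront cost, estimated annual saving)
candidates = [
    ("Replace legacy CRAC units", 1_200_000, 400_000),
    ("Retrofit aisle containment", 250_000, 150_000),
    ("Refresh oldest compute racks", 2_000_000, 500_000),
    ("LED lighting", 60_000, 10_000),
]


def simple_payback_years(cost: float, annual_saving: float) -> float:
    return cost / annual_saving


def plan_upgrades(options: list[tuple[str, int, int]], budget: int) -> tuple[list[str], int]:
    """Greedy: fund the fastest-payback upgrades first while they fit in the budget."""
    chosen, remaining = [], budget
    for name, cost, saving in sorted(options, key=lambda o: simple_payback_years(o[1], o[2])):
        if cost <= remaining:
            chosen.append(name)
            remaining -= cost
    return chosen, remaining


chosen, left = plan_upgrades(candidates, budget=1_500_000)
print("Funded this year:", chosen)
print(f"Unspent budget: {left:,}")
```

With a $1.5 million budget, the sketch funds the containment retrofit and the CRAC replacement, leaving $50,000 unspent.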

ML Deployment: If not already in place, machine learning optimization for cooling represents one of the highest-impact investments available.

Conclusion

Improving energy efficiency in data centers is not a single optimization problem. It is a systems engineering challenge.

Advances in cooling, machine learning, and hardware design offer measurable gains, but each solution introduces new constraints and trade-offs. Liquid cooling reduces energy consumption but may increase water usage. AI optimization requires upfront investment and operational expertise. Renewable energy depends on location and grid infrastructure.

The next phase of efficiency will depend less on isolated improvements and more on how well operators integrate electrical, thermal, and digital layers into a coherent optimization framework.

Each data center has its own constraints and opportunities. But the collective goal is clear: scale to meet demand while approaching a PUE of 1.0 and minimizing environmental impact.

The technology exists. The economics are compelling. What remains is execution.

References

Basel Committee on Banking Supervision (2013). Principles for Effective Risk Data Aggregation and Risk Reporting (BCBS 239).

International Energy Agency (2025). Energy and AI. Paris: IEA. Available at: https://www.iea.org/reports/energy-and-ai

International Energy Agency (2025). Share of electricity consumption by data centre and equipment type, 2024. Available at: https://www.iea.org/data-and-statistics/charts/share-of-electricity-consumption-by-data-centre-and-equipment-type-2024

Google (2025). Data Center Efficiency. Available at: https://datacenters.google/efficiency/

Google Sustainability (2025). Environmental Report. Available at: https://sustainability.google/reports/

Amazon Web Services (n.d.). Sustainability: Data Centers. Available at: https://aws.amazon.com/sustainability/data-centers/

Global Data Center Hub (2025). What’s Inside a Data Center: The 5 Key Components. Available at: https://www.globaldatacenterhub.com/p/whats-inside-a-data-center-the-5

ASHRAE (n.d.). Technical Resources: Standards and Guidelines. Available at: https://www.ashrae.org/technical-resources/standards-and-guidelines

DeepMind (2016). DeepMind AI Reduces Google Data Centre Cooling Bill by 40%. Available at: https://deepmind.com/blog/article/deepmind-ai-reduces-google-data-centre-cooling-bill-40

Lawrence Berkeley National Laboratory (n.d.). PUE: A Comprehensive Examination of the Metric. Available at: https://datacenters.lbl.gov/

IBM (n.d.). Big Data Analytics. Available at: https://www.ibm.com/think/topics/big-data-analytics

About the Author

Ricardo Sousa is a Data Scientist Engineer at Ivy Partners, with four to five years of experience applying machine learning and data analytics to drive decision-making in the energy and insurance industries. With a strong background in statistical modeling, predictive analytics, data engineering, and data visualization, Ricardo has worked on projects ranging from energy efficiency optimization in data centers to power plant optimization and claims automation. Passionate about using data and statistics for impact, Ricardo brings a practical, results-oriented approach to solving complex business challenges through AI and data-driven solutions.